- 6803 Parke East Blvd. Tampa, FL. 33610

- 800.728.4942

- 813-231-6305

Showing 1–16 of 50 results, sorted by price: high to low

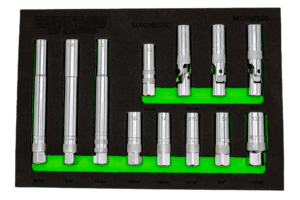

12 Piece 3/8″ Dr. Magnetic Spark Plug Master Set

XL SELF-ADJUSTING OIL FILTER PLIERS (3-3/4″ ~ 7″)

Drain Plug Wrench Set, Extra Long 8mm thru 19mm

Digital angle & torque meter 3/8″ + 1/2″ dr.

Spark Plug Master Set, 13 pc set, include XL, Standard and Swivel Spark Plug Tools

Hub and Stud Cleaning Kit – Truck

Hub and Stud Cleaning Kit – Car

78” LIFT GATE / HOOD PROP – GREEN (36” – 78”)

SCREW-ON BEARING PACKER W/ GREASE NOZZLE

5 piece Brake Bleeder Wrench Set, 7mm, 8mm, 9mm, 10mm, & 11mm

Hub/Dust Cap Plier, heavy duty, up to 4-1/2”

GASKET CLEANING PAD 4 PK – 50MM

Auto adjusting, locking oil filter pliers

E12 TORX® BMW Starter Tool

22MM STUD CLEANING WIRE BRUSH – TRUCK – 4 PACK

7″ Professional Wire Crimper