- 6803 Parke East Blvd. Tampa, FL. 33610

- 800.728.4942

- 813-231-6305

Showing 1–16 of 51 resultsSorted by price: high to low

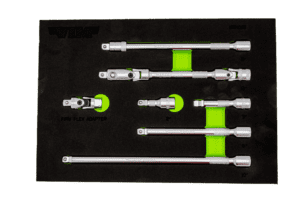

7 Piece 3/8″ Drive Master Extension Kit

Out of stock

TELESCOPIC WRENCH EXTENDER, 18″-26″

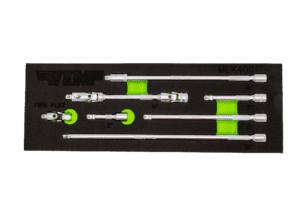

7 Piece 1/4″ Drive Master Extension Kit

4 Piece Firm Flex Dual Drive 1/4” x 11mm UJ Kit

6 PC. IMPACT BIT HOLDER SET W/PURPLE MAGRAIL

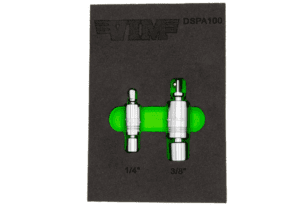

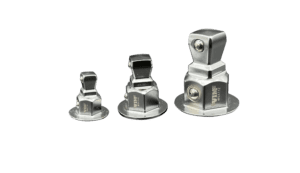

DUAL SWIVEL PINLESS ADAPTER SET – SATIN FINISH

4 Piece Power Driver UJ Adapter Set

1/2″ Dual Swivel Extension, 12 ” long with locking square drive

15″ Wrench Extender

9 in stock

8” L 3/8” DR. DOUBLE JOINT UNIVERSAL LOCKING EXTENSION W/ SPRING-LOADED MALE END

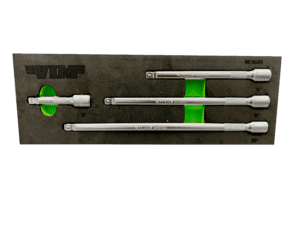

4 Piece 3/8″ Drive Wobble Extension Set

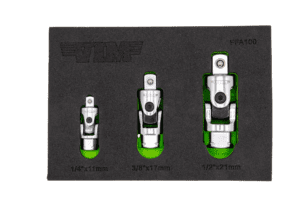

3 PC. FIRM FLEX DUAL DRIVE UJ ADAPTER SET

1/4” X 3/8” DR. DUAL HEAD NANO FLEX RATCHET – 4.5” OAL

1/4” DR. X 1/4” BIT HOLDER DUAL HEAD NANO FLEX RATCHET – 3.5” OAL

3 in stock

3/8″ Dual Swivel Extension, 8 ” long with locking square drive

3 pc. Wobble Socket Adapter Set